Motivation

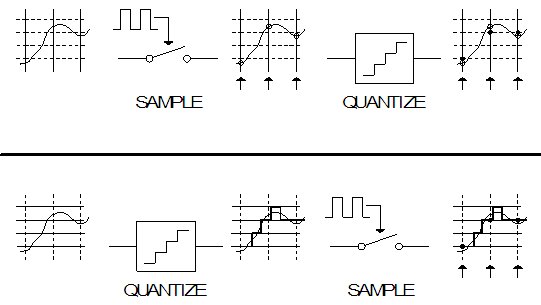

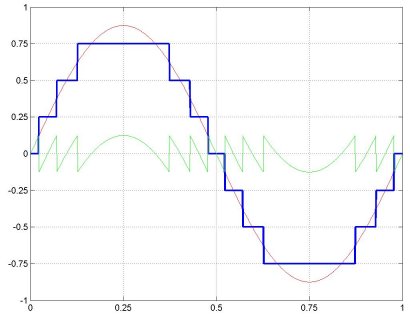

Conventional digital signal processing (DSP) samples the input and then quantizes the amplitude at each discrete sampling instant into a finite-bit representation. Two processes are at work here: amplitude quantization and time discretization. The former is the essence of DSP: a finite number of bits represents the analog input signal. The latter is a consequence of using a synchronous (clocked) system, which updates its internal state at regular intervals. For the example case of a sinusoidal input, the effect of amplitude quantization is to introduce harmonic distortion (as demonstrated below in Fig. 3). The effect of sampling is aliasing, the repeated folding of the signal spectrum.

What is critical to note is that even though conventional DSP samples and then quantizes, reversing the order of operations (quantizing in a continuous-time (CT) fashion and then sampling) gives the same result, because the quantizer is memoryless and acts on each instantaneous amplitude independently (pause to consider this! If still unclear, see Fig. 4 at the bottom of this page). Fig. 1 shows the spectrum of a single sinusoid as it is quantized and then sampled (Fig. 2 shows the time-domain waveforms of these signals, using 3 bits of amplitude quantization). This order of operations makes it clear that sampling introduces a significant amount of distortion at lower frequencies, by aliasing down the distortion introduced by the amplitude quantization.

As an example, for a system of interest that is low-pass in nature, compare the amount of distortion in any given bandwidth in the center and right-most plots of Fig. 1. The plot on the right has the same harmonic distortion as the center plot, plus additional aliased harmonic distortion. For any bandwidth, therefore, the center plot is guaranteed to have a higher signal-to-distortion ratio (SDR) than the plot on the right.

Note that the example in Fig. 1 assumes no blockers, noise, or any other form of corruption. If these were present, a normal system would use an anti-aliasing filter so that out-of-band blockers do not alias down into the band of interest after sampling. In Fig. 1, however, aliasing occurs only after quantization, so if processing is performed solely on the post-quantization, pre-sampling signal, out-of-band blockers will not alias down and corrupt the signal, which is a significant advantage. Exploiting these two properties (a guaranteed higher SDR because the quantization distortion is not aliased down, and no aliasing of out-of-band blockers) is the motivation for moving to continuous-time DSP.
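The two claims above can be checked numerically. The following is a minimal sketch, not the method used to produce the figures: it assumes a uniform 3-bit mid-rise quantizer, approximates continuous time with a dense grid at 64 times the sampling rate, and borrows the 1 kHz input, 44.1 kHz sampling rate, and 3 kHz band from the Fig. 3 caption. The names `quantize`, `alias_frequency`, and `oversample` are illustrative only.

```python
import numpy as np

def quantize(x, bits=3, full_scale=1.0):
    """Uniform mid-rise quantizer (an assumed quantizer shape).

    It is memoryless: each output depends only on the input value
    at that same instant.
    """
    levels = 2 ** bits
    step = 2.0 * full_scale / levels
    idx = np.floor(x / step)
    idx = np.clip(idx, -(levels // 2), levels // 2 - 1)
    return (idx + 0.5) * step

def alias_frequency(f, fs):
    """Frequency in [0, fs/2] at which a tone at f appears after sampling at fs."""
    f_mod = f % fs
    return min(f_mod, fs - f_mod)

f_in = 1_000.0      # input sinusoid frequency, Hz
fs = 44_100.0       # sampling rate, Hz
band = 3_000.0      # low-pass band of interest, Hz
oversample = 64     # dense grid standing in for continuous time (assumption)
n_samples = 441     # 10 ms of signal, i.e. 10 periods of the input

t_dense = np.arange(n_samples * oversample) / (fs * oversample)
x_dense = np.sin(2.0 * np.pi * f_in * t_dense)

# Path A: quantize in (approximate) continuous time, then sample.
quantize_then_sample = quantize(x_dense)[::oversample]
# Path B: sample first, then quantize (conventional DSP order).
sample_then_quantize = quantize(x_dense[::oversample])

same_result = np.array_equal(quantize_then_sample, sample_then_quantize)
print("order of operations irrelevant:", same_result)

# Harmonics of the input that lie above fs/2 but fold into the band
# of interest once the quantized signal is sampled.
folded = [k for k in range(2, 61)
          if k * f_in > fs / 2 and alias_frequency(k * f_in, fs) < band]
for k in folded:
    print(f"harmonic {k} at {k * f_in:.0f} Hz aliases to "
          f"{alias_frequency(k * f_in, fs):.0f} Hz")
```

Because the quantizer acts on each value independently, both paths yield identical samples. The loop then lists which harmonics between the 2nd and the 60th would land inside the 3 kHz band only because of sampling; whether a given harmonic carries significant energy depends on the quantizer and the input amplitude, which this sketch does not model.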

Fig. 1 Progression of spectra of input sinusoid: quantize then sample.


Fig. 3 Output spectrum of a CT-DSP (top) vs. a conventional (synchronous) DSP (bottom) for a 1 kHz input sinusoid, where the DSP is a first-order LPF with a 3 kHz corner frequency. Both use 8 bits of amplitude quantization; sampling in the bottom plot is at 44.1 kHz.
